20 Biggest Mistakes SEOs Make with Regard to Their Sites

While SEO is no rocket science, it's a highly dynamic niche where keeping your finger on the industry's pulse is important. Not being familiar with the latest SEO trends - such as not knowing Google just released Penguin 3.0 - may cost you, making your SEO efforts less effective and causing you to fall behind the competition.

1. Thinking "SEO keywords"

Previously, SEOs picked a set of keywords and optimized their websites for exactly those terms. Although keyword research is still important, investing your whole fortune into a limited group of search terms is a thing of the past. After the Hummingbird update, Google started rewriting queries at will, like in this example:

Google won't trust you have a tiger in the cage just because the sign says TIGER. Hence, not only should you optimize the site for popular search terms, but also use synonyms and related keywords.

Besides, being mentioned alongside niche competitors is believed to help Google attribute your site to a particular "keyword bucket", which could increase your rank for a wider array of words even though you don't have them on your site.

2. Not using structured markup

Another effect of the Hummingbird update was that it signified a conscious shift to the semantic Web on Google's part. Google had been transitioning to becoming an answer engine even before the Hummingbird update, but after this update it became official.

And, what makes your site more semantically clear is the use of structured (semantic) markup. If you use it, it'll be easier for search engines to identify different pieces of information in your code, and they will be more likely to accompany your links with all sorts of rich snippets in search results.
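To make this more concrete, here is a minimal sketch of one common form of structured markup: a schema.org description embedded as JSON-LD. The helper name `build_json_ld` and the company details are hypothetical, and which properties a given search engine actually uses for rich snippets is not something this sketch can promise.

```python
import json

def build_json_ld(name: str, url: str, description: str) -> str:
    """Return a <script> tag holding schema.org Organization markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
    }
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"

# Hypothetical company details, used only for illustration.
snippet = build_json_ld(
    "Example Interiors",
    "https://example.com/",
    "Interior design studio",
)
print(snippet)
```

The resulting tag would typically go into the page's HTML, where a crawler can read the labelled fields instead of guessing them from the surrounding text.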

3. Handing over your keywords to competitors

Much has been said about the uselessness of meta keywords tags, but people still use them. Well, one important reason to avoid this page element is that it virtually hands all your keywords to your competitors on a silver platter.

4. Misunderstanding "SEO competition"

I've heard some people say they look at Google AdWords stats to evaluate competition. But the HIGHs and LOWs you see in Google AdWords are just that - your PPC competition. Likewise, checking how many pages rank for a keyword in the SERPs tells you nothing.

The only effective way to assess SERP competition is by analyzing the top 10 pages that rank for your keywords. What's telling in this respect are the links pointing to those pages, their content optimization score, Domain Strength, and other factors. You can check these metrics one at a time or get them all in a click with Rank Tracker's Keyword Difficulty tool.

5. Using Flash

Sometimes people let their imagination run wild and ask the web designer to create some mind-blowing visual for their page. For example, an elephant walking into the room (if you're an interior design company).

Most likely, to achieve this effect your web developer will be forced to use Flash, and this may be bad for SEO. Since search engines can't reliably read Flash content, having it on your site is not a brilliant idea (especially in the mobile era).

6. Thinking social is the new link building

Some people say one should forget all about links and focus on social instead. Social is the new link building, they say. But here's the thing: Google doesn't use social signals in their ranking algorithm, period.

People are often tempted to believe otherwise, because they've seen studies that claim a correlation between high rankings and a large number of social signals to the page (Google +1's in particular).

At the same time, Google has said many times that it doesn't use Facebook or Twitter signals to determine a page's rank, because it can't crawl Facebook or Twitter deeply enough to be able to trust those signals. It has also advised SEOs against spamming their sites with Google +1's.

7. Measuring title/description length in characters

It has long been established that Google allocates only so many pixels of its SERP real estate to display your page title. Yet some people in the SEO industry continue to measure title length in characters.

Well, if your title consists mostly of narrow letters like "iiiii" rather than wide ones like "www", you'll be able to fit more characters into it before Google truncates it. In March this year, Google began using 18-pixel Arial typeface for search results, so now industry pros generally recommend keeping the title under 512 pixels. By the way, you can measure your title using Pixel Ruler (Windows), Free Ruler (Mac) or a similar piece of software.
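The difference between counting characters and counting pixels can be sketched in a few lines. Note the assumptions: the per-character widths below are made-up placeholders, not real Arial metrics, and the function names are hypothetical. Only the 512-pixel limit comes from the recommendation above. For real titles you would swap in measured widths.

```python
# Illustrative per-character widths in pixels. These numbers are made-up
# placeholders, NOT real Arial metrics; replace them with measured values.
ASSUMED_WIDTHS = {"i": 4, "l": 4, "w": 13, "m": 15, " ": 5}
ASSUMED_DEFAULT_WIDTH = 10  # placeholder for every character not listed above
PIXEL_LIMIT = 512           # the title limit quoted in the article

def estimate_title_width(title, widths=ASSUMED_WIDTHS, default=ASSUMED_DEFAULT_WIDTH):
    """Sum the assumed pixel width of every character in the title."""
    return sum(widths.get(ch, default) for ch in title)

def fits(title, limit=PIXEL_LIMIT):
    """True if the estimated width does not exceed the pixel limit."""
    return estimate_title_width(title) <= limit

# Two titles of identical character length, very different estimated widths.
narrow_title = "i" * 100
wide_title = "w" * 100
print(fits(narrow_title), fits(wide_title))
```

With these placeholder widths, a 100-character title made of narrow letters fits while a 100-character title made of wide letters does not, which is the reason a character count alone is a poor guide.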

8. Not taking mobile seriously

As you may know, the Web is predominantly mobile nowadays: 60% of users prefer this method of getting online. So, if your site is not optimized for mobile, you may be missing out on a lot of potential traffic. First, because Google ranks unresponsive sites lower in mobile SERPs. And second, because Google now accompanies unresponsive sites with an unsightly message that reads "May not work on your device".

9. Underestimating the value of site audits

Unless you have a one-page website, it's important to routinely audit your site to reveal any crawlability, accessibility and indexing problems before they cause trouble.

There are tools like WebSite Auditor that let you analyze even the local copy of your site before you publish it to the Web. Thing is, sometimes people forget they implemented a noindex tag on some URL they're now reusing for another purpose. Or that there is internal duplicate content on the site - a site audit lets you discover instances like that.
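As a rough illustration of one such audit check, the sketch below scans a page's HTML for a robots meta tag that contains `noindex`. It uses only Python's standard `html.parser` module; the class and function names are hypothetical, and a real audit would also need to look at HTTP headers, robots.txt and more.

```python
from html.parser import HTMLParser

class RobotsMetaParser(HTMLParser):
    """Records whether the page carries <meta name="robots" content="...noindex...">."""

    def __init__(self):
        super().__init__()
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # Attribute values can be None (e.g. <meta name>), so default to "".
        attr = {key: (value or "") for key, value in attrs}
        is_robots = attr.get("name", "").lower() == "robots"
        if is_robots and "noindex" in attr.get("content", "").lower():
            self.noindex = True

def has_noindex(html: str) -> bool:
    """Return True if the HTML contains a robots meta tag with noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return parser.noindex

page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(has_noindex(page))
```

Running a check like this over every URL you are about to reuse is one simple way to catch a forgotten noindex tag before it costs you traffic.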

10. Not watching search trends

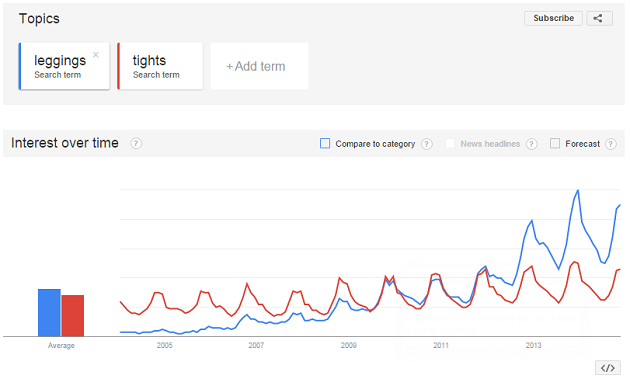

100 years ago people could have searched for automobiles, and nowadays they search for cars. Search trends change all the time, with new keywords entering the arena and no-longer-popular search terms leaving it.

You can use Google Trends to track search tendencies and volumes:

By the way, Rank Tracker has Google Trends integrated into its Keyword Research tool.

11. Ignoring the power of XML Sitemap

An XML Sitemap helps search engines in many ways: it tells them which versions of your URLs should be included in their indices and how frequently they should crawl your site. It also informs them of new URLs that appeared on your site and gives them an idea of your site's structure.

Hence, having a full XML Sitemap and keeping it up to date can significantly increase your site's chances of high rankings.
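For readers who have never seen one, the following is a minimal sketch of generating an XML Sitemap with Python's standard library. The element names (`urlset`, `url`, `loc`, `lastmod`, `changefreq`) and the namespace come from the sitemaps.org protocol; the function name `build_sitemap`, the page list and the dates are hypothetical.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(pages):
    """Build a sitemap string from dicts with 'loc' and optional 'lastmod'/'changefreq'."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = page["loc"]
        # Optional hints; only emitted when the caller supplies them.
        for field in ("lastmod", "changefreq"):
            if field in page:
                ET.SubElement(url, field).text = page[field]
    return ET.tostring(urlset, encoding="unicode")

# Hypothetical pages, used only for illustration.
sitemap_xml = build_sitemap([
    {"loc": "https://example.com/", "changefreq": "weekly"},
    {"loc": "https://example.com/watch/", "lastmod": "2014-10-20"},
])
print(sitemap_xml)
```

Regenerating the file whenever pages are added or removed is what keeps it up to date; how much weight a search engine gives to hints such as `changefreq` is up to the engine.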

12. Not re-writing their URLs

It is considered best SEO practice to clean-code your site's URLs (unless your site software does that for you). That is, instead of using meaningless symbols and numbers in URLs, use keywords. This will make your URLs not only search engine-friendly, but also easier to remember for users.

Default URL: example.com/page.php?category=7&product=18

Clean-coded URL: example.com/watch/TAGHeuer/
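If your site software doesn't produce clean URLs for you, the rewriting rule can be sketched roughly as follows. This is an assumption-laden illustration, not a drop-in solution: the helpers `slugify` and `clean_url` are hypothetical, and lowercasing plus hyphen separators is one common convention rather than a requirement (the example above keeps the brand's capitalization).

```python
import re

def slugify(text):
    """Lowercase the text and replace every run of non-alphanumerics with one hyphen."""
    slug = re.sub(r"[^a-z0-9]+", "-", text.lower())
    return slug.strip("-")

def clean_url(host, *segments):
    """Join human-readable names into a keyword-based URL path."""
    path = "/".join(slugify(segment) for segment in segments)
    return host.rstrip("/") + "/" + path + "/"

# Category 7 and product 18 from the default URL, looked up by name (hypothetical mapping).
print(clean_url("example.com", "Watch", "TAG Heuer"))  # example.com/watch/tag-heuer/
```

On a live site you would pair this with server-side rewrite rules and redirects from the old parameter-based URLs, so that existing links keep working.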

13. Being blissfully unaware of search personalization

These days, Google and other big search engines provide search results based on more than the keywords you type in. They also consider your language and location, your search history and your social media connections (which is particularly true of Google+) to return the most relevant search results possible.

So, not taking that into account when tracking website rankings is likely to skew your vision of where your site ranks for different online groups.

14. Using tags and tag clouds

Tagging your content using each and every keyword you can think of is a bad idea and here's why. First, tags lead to internal duplicate content. Then, if you have a tag cloud and it's rather extensive, this can be viewed as keyword stuffing in link anchor texts.

Unless you really must use tags, consider using categories instead (even Matt Cutts uses categories on his blog), or at least block your tag pages from being indexed.

15. Having a Welcome page

I still see websites using Welcome/Enter pages to salute their visitors on arrival. At the same time, such pages are absolutely redundant. Not only do they add to your site's loading time, but they are also not favored by search engines.

When a search bot gets to your site, it wants to understand what it's dealing with as quickly as possible. If it sees a Welcome/Enter page instead of your content-rich homepage, this is akin to you calling a phone number and getting the annoying "to speak to a customer service representative, press 0". I mean, aren't you calling because you want to speak to someone in the first place?

16. Not having a strategy for internal linking

In the post-Penguin world, internal linking is one of the few link building methods left to SEOs. Interlinking pages of your site using relevant anchor texts can work wonders. The idea is not to overdo it, of course, and to diversify the anchor texts used.

17. Thinking negative SEO is a joke

If you think negative SEO is like Mr. Grinch - everyone's talking about it, yet it doesn't exist - you couldn't be more wrong! There are plenty of real-world cases where negative SEO has caused people real trouble. So, it's best to check backlinks to your site on a regular basis and disavow any suspicious, low-quality links right away.
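Once you have a list of links you no longer trust, the disavow file itself is plain text: one URL per line, `domain:` entries for whole domains, and `#` for comments. The sketch below assembles such a file; the function name `build_disavow` and the sample domains are hypothetical, and deciding which links are actually harmful remains a manual judgment call.

```python
def build_disavow(domains, urls, note=""):
    """Return disavow-file text: optional comment, then domain: lines, then single URLs."""
    lines = []
    if note:
        lines.append("# " + note)
    # De-duplicate and sort so repeated runs produce the same file.
    lines += ["domain:" + domain for domain in sorted(set(domains))]
    lines += sorted(set(urls))
    return "\n".join(lines) + "\n"

# Hypothetical suspicious sources, used only for illustration.
disavow_text = build_disavow(
    domains=["spam.example", "spam.example"],
    urls=["http://bad.example/page.html"],
    note="reviewed manually",
)
print(disavow_text)
```

The resulting text would then be saved to a file and uploaded through the search engine's disavow tool.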

18. Not trying to improve site load speed

Page load speed is now officially a ranking factor, and it has even more weight for mobile. Although most web developers implement caching, gzip compression and other speed optimization must-dos, unaware content creators often forget to crop and resize images. Some even commit the most foolish speed optimization sin of all time: they "resize" overly large images by adjusting the display dimensions directly in the content management system, so the full-size file still has to be downloaded!

19. Not realizing it's all about the brand now

OK, here comes the letting-the-cat-out-of-the-bag moment of this post: brand building may well replace SEO in the future. With entity graph building, semantic web and more insight into what online businesses are about, Google doesn't view your website as a repository of relevant content anymore - it considers it an online avatar of your real-world company. The more real-worldly your company appears online and the stronger your brand is, the better.

20. Not seeing the big picture!

Some 10 years ago, SEO was a purely technical thing: all one needed to do was use the right keywords in the right places, exchange or buy some links and, voila, one could make the site rank.

Then came the necessity to create more compelling content. Then the necessity to make sites mobile-friendly and the need to optimize for the semantic web. Then the need to attract earned media and to do good old PR, because pre-Penguin link building simply stopped working.

SEO in its pure, original form has become reduced to just a small portion of what helps the site rank. Hence, it's important to see how it fits into the larger marketing and web development landscape to make it work.

That is all, thanks for reading. Questions and comments are welcome!